What is MCP.so?

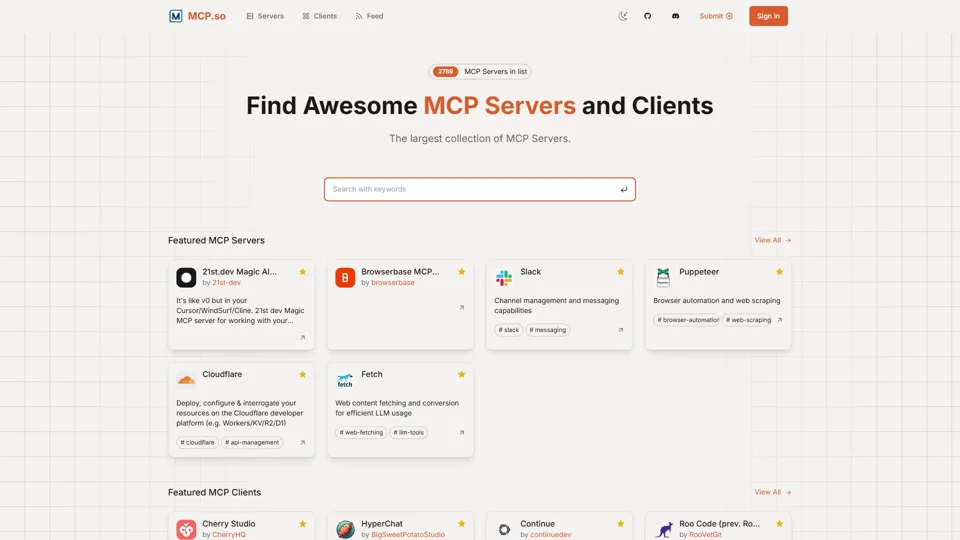

MCP.so is the largest community-driven directory of Model Context Protocol (MCP) servers and clients, serving as a centralized hub for developers and AI practitioners to discover, share, and integrate AI-powered tools. It enables seamless connections between AI systems like Anthropic’s Claude and external data sources, APIs, and productivity tools through standardized protocols.

Key Features of MCP.so

- Extensive Server Repository: Access 2,789+ MCP servers across 200+ categories, including browser automation (Puppeteer), cloud services (Cloudflare), and messaging tools (Slack).

- Client Integration Support: Explore 50+ MCP clients like HyperChat and Cherry Studio for implementing AI workflows in code editors and chat applications.

- Open-Source Collaboration: Submit and showcase custom MCP servers/clients via GitHub integration, fostering community-driven AI tool development.

- Secure Protocol Compliance: Leverage built-in security with 1:1 encrypted connections, server-controlled authentication, and data privacy safeguards.

How MCP.so Enhances AI Development

- Real-Time Data Access: Connect AI models to live data sources (e.g., Slack messages, web content via Fetch server) for context-aware responses.

- Tool Chaining: Combine servers like Puppeteer (web scraping) and Cloudflare (API management) to automate complex workflows.

- IDE Integration: Use clients like Zed and Roo Code to embed AI agents directly into development environments for code assistance.

- Enterprise Scalability: Supports local MCP servers for testing and prepares for enterprise-grade remote server deployments.
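Tool chaining amounts to feeding one server's result into the next server's call. The following is a minimal sketch that uses stand-in functions in place of real MCP tool calls; `scrape_page`, `publish_summary`, and `run_chain` are hypothetical names, and a real workflow would route each step through an MCP client.

```python
from typing import Any, Callable

Step = Callable[[Any], Any]


def run_chain(steps: list[Step], initial: Any) -> Any:
    """Pass `initial` through each step in order and return the final result."""
    result = initial
    for step in steps:
        result = step(result)
    return result


def scrape_page(url: str) -> dict:
    # Stand-in for a browser-automation tool call (e.g. via a Puppeteer server).
    return {"url": url, "title": f"Title of {url}"}


def publish_summary(page: dict) -> str:
    # Stand-in for a second server's tool that consumes the scraped data.
    return f"Published: {page['title']}"


print(run_chain([scrape_page, publish_summary], "https://example.com"))
```

Keeping each step a plain callable means a step can later be swapped for a real tool call without changing `run_chain`.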

Pricing Structure

MCP.so operates as a free community resource with optional enterprise-tier features:

- Free Tier: Unlimited server discovery, GitHub-based submissions, and basic client integrations.

- Enterprise Plans (Coming 2025): Advanced server analytics, priority support, and custom protocol extensions.

Helpful Tips for Maximizing MCP.so

- Tag Filtering: Use tags like #browser-automation or #llm-tools to quickly find specialized servers.

- Starter Kits: Clone template repositories like Magic MCP to accelerate server development.

- Claude Integration: Pair with Claude Desktop for local MCP server testing before deploying to production.

- Community Channels: Join the Discord server for updates on new servers like Browserbase’s headless browsing tool.
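For the Claude Desktop tip, local servers are registered under an `mcpServers` key in Claude Desktop's `claude_desktop_config.json`. The sketch below generates such a configuration as JSON. The server labels, launch commands, and package names are assumptions to verify against each server's own README, and `build_config` is a hypothetical helper.

```python
import json


def build_config(servers: dict[str, dict]) -> dict:
    """Wrap per-server launch settings in the `mcpServers` layout."""
    return {"mcpServers": servers}


# Assumed launch commands; check each server's README before use.
servers = {
    "fetch": {"command": "uvx", "args": ["mcp-server-fetch"]},
    "puppeteer": {
        "command": "npx",
        "args": ["-y", "@modelcontextprotocol/server-puppeteer"],
    },
}

config = build_config(servers)
config_json = json.dumps(config, indent=2)

# Paste the printed JSON into claude_desktop_config.json, then restart the client.
print(config_json)
```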

Frequently Asked Questions

How does MCP differ from OpenAI’s GPTs?

MCP provides standardized, secure access to live data and tools, whereas GPTs focus on custom chatbot interfaces without native protocol-level integrations.

Can I use MCP servers with non-Anthropic models?

Yes – clients like HyperChat and 5ire support multiple LLMs through the protocol’s open-source specification.

What security measures protect my data?

Servers maintain autonomous control over resources, enforce OAuth-style authentication, and never share raw API keys with AI providers.

How do I troubleshoot server connections?

Use the MCP Starter Guide and validate configurations with the Fetch server’s web content toolkit.
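A quick first check is whether the client configuration is well-formed. The sketch below assumes the `mcpServers` layout used by Claude Desktop, where each entry has a `command` string and an optional `args` list of strings; `validate_config` is a hypothetical helper, not part of any MCP tool.

```python
import shutil


def validate_config(config: dict, check_path: bool = False) -> list[str]:
    """Return human-readable problems; an empty list means none were found."""
    servers = config.get("mcpServers")
    if not isinstance(servers, dict) or not servers:
        return ["missing or empty 'mcpServers' object"]

    problems = []
    for name, entry in servers.items():
        if not isinstance(entry, dict):
            problems.append(f"{name}: entry must be an object")
            continue
        command = entry.get("command")
        if not isinstance(command, str) or not command:
            problems.append(f"{name}: 'command' must be a non-empty string")
        elif check_path and shutil.which(command) is None:
            # Optional: confirm the launcher is actually installed.
            problems.append(f"{name}: '{command}' not found on PATH")
        args = entry.get("args", [])
        if not (isinstance(args, list) and all(isinstance(a, str) for a in args)):
            problems.append(f"{name}: 'args' must be a list of strings")
    return problems


print(validate_config({"mcpServers": {"bad": {"args": "oops"}}}))
```

Passing `check_path=True` additionally reports launch commands that are not installed, which is a common reason a server fails to start.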

Are commercial applications allowed?

Yes, but ensure compliance with each server’s licensing terms (e.g., Cloudflare’s enterprise API restrictions).

Keyword Focus: Model Context Protocol (MCP)

The Model Context Protocol revolutionizes AI development by standardizing how LLMs interact with external systems. Unlike traditional API integrations requiring custom code for each tool, MCP:

- Unifies Tool Access: Exposes the following through a single protocol layer:

  - Browser automation (Puppeteer server)

  - Cloud infrastructure (Cloudflare server)

  - Knowledge bases (5ire client)

- Enables Cross-LLM Compatibility: Servers work interchangeably with Claude, GPT-4, and open-source models via clients like Continue.dev.

- Reduces Hallucination Risk: Grounds responses in live data from authenticated servers, reducing reliance on static training data.

- Accelerates Enterprise Adoption: Audit trails via server-side logging and granular permission controls address compliance requirements.
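To make the single protocol layer concrete: MCP messages are JSON-RPC 2.0, so a client invokes any server's tool with the same request shape. The sketch below builds a `tools/call` request. The tool names and arguments are illustrative assumptions rather than a specific server's documented interface, and `build_tool_call` is a hypothetical helper.

```python
import itertools
import json

_request_ids = itertools.count(1)  # each JSON-RPC request carries a unique id


def build_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request for MCP's `tools/call` method."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Two different tools, one request shape (tool names are assumed).
navigate = build_tool_call("puppeteer_navigate", {"url": "https://example.com"})
fetch = build_tool_call("fetch", {"url": "https://example.com"})

print(json.dumps(navigate, indent=2))
```

Only `params` differs between the two requests; the envelope stays the same, which is what lets one client talk to many servers.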

Developers building MCP servers gain access to 2,789+ potential integration points, while enterprises benefit from reduced AI implementation costs through protocol standardization.